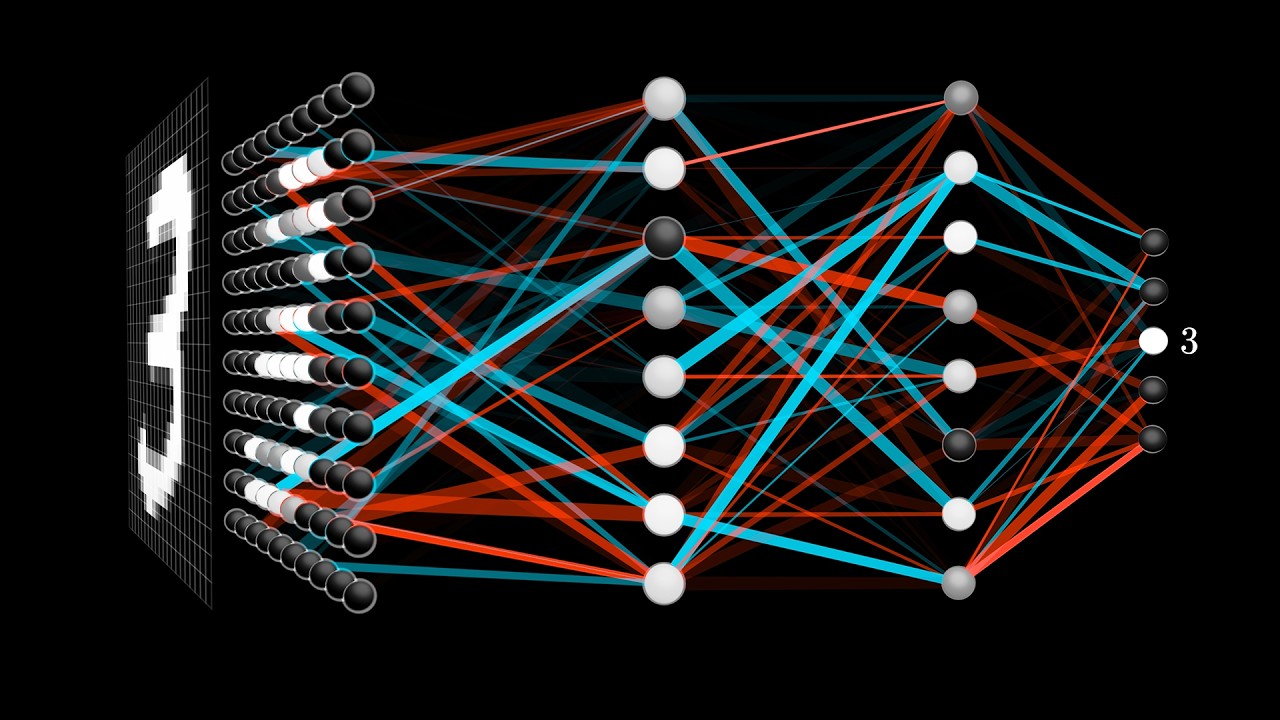

Meta-Learning via Feature-Label Memory Network, by Dawit Mureja and 2 other authors

Abstract: Deep learning typically requires training a very capable architecture using large datasets. However, many important learning problems demand the ability to draw valid inferences from small datasets, and such problems pose a particular challenge for deep learning. In this regard, research on "meta-learning" is being actively conducted. Memory-augmented neural networks [20, 37, 38] proposed the use of memory enhancement methods to solve few-shot learning tasks. Recent work has suggested a Memory Augmented Neural Network (MANN) for meta-learning. MANN is an implementation of a Neural Turing Machine (NTM) with the ability to rapidly assimilate new data in its memory and use this data to make accurate predictions.

In models such as MANN, the input data samples and their appropriate labels from the previous step are bound together in the same memory locations. This often leads to memory interference when performing a task, as these models have to retrieve a feature of an input from a certain memory location and read only the label information bound to that location. In this paper, we address this issue by presenting a more robust MANN. We revisit the idea of meta-learning and propose a new memory augmented neural network by explicitly splitting the external memory into feature and label memories. The feature memory stores the features of input data samples, and the label memory stores their labels. Hence, when predicting the label of a given input, our model uses its feature memory unit as a reference to extract the stored feature of the input, and based on that feature, it retrieves the label information of the input from the label memory unit. For the network to function in this framework, a new memory-writing module is designed to encode label information into the label memory in accordance with the meta-learning task structure. Here, we demonstrate that our model outperforms MANN by a large margin in supervised one-shot classification tasks using the Omniglot and MNIST datasets.
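The split between feature memory and label memory can be illustrated with a small toy sketch. This is not the paper's model: the abstract describes a learned memory-writing module, whereas the code below simply appends entries and reads them back by cosine similarity. The class `SplitMemory`, its methods, and the `sharpness` parameter are hypothetical names chosen for illustration only.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm > 0 else 0.0


def softmax(scores, sharpness=10.0):
    """Numerically stable softmax; `sharpness` is an assumed scaling knob."""
    scaled = [sharpness * s for s in scores]
    peak = max(scaled)
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


class SplitMemory:
    """Toy external memory with separate feature and label stores (hypothetical)."""

    def __init__(self, num_classes):
        self.num_classes = num_classes
        self.features = []  # feature memory: one feature vector per slot
        self.labels = []    # label memory: one one-hot label per slot

    def write(self, feature, label):
        """Store a feature and its label at the same slot index in the two memories."""
        one_hot = [1.0 if c == label else 0.0 for c in range(self.num_classes)]
        self.features.append(list(feature))
        self.labels.append(one_hot)

    def read(self, query):
        """Address slots by feature similarity, then read the label memory with those weights."""
        if not self.features:
            raise ValueError("memory is empty")
        weights = softmax([cosine(query, f) for f in self.features])
        return [
            sum(w * row[c] for w, row in zip(weights, self.labels))
            for c in range(self.num_classes)
        ]

    def predict(self, query):
        """Return the class with the largest retrieved label weight."""
        probs = self.read(query)
        return max(range(self.num_classes), key=lambda c: probs[c])


# One-shot usage: one stored example per class, then a noisy query.
memory = SplitMemory(num_classes=3)
memory.write([1.0, 0.0, 0.0], label=0)
memory.write([0.0, 1.0, 0.0], label=1)
memory.write([0.0, 0.0, 1.0], label=2)
print(memory.predict([0.1, 0.9, 0.0]))  # prints 1
```

The point of the sketch is the two-step read: the query is matched only against the feature memory, and the resulting weights are then applied to the label memory, so labels never take part in the similarity computation.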
